
Beta-generated distributions are a broad class of univariate distributions that has received great attention over the last 15 years, since it has attractive properties and extends the distribution of order statistics. The proposed estimator of the Shannon entropy of beta-generated distributions is motivated by Vasicek's estimator: it adapts Vasicek's spacing-based estimator to the class of beta-generated distributions and to the distribution of order statistics. The estimator is defined and its consistency is studied. It is, moreover, exploited to build a goodness-of-fit test for the beta-generated distribution and the distribution of order statistics. Simulations are performed to examine the small- and moderate-sample properties of the proposed estimator and to compare the power of the proposed test with that of competing tests under a variety of alternatives.

Entropy algorithms require input of what is normal, so on the back end they already hold a sample of the normal ordering of letters in English words.
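For readers unfamiliar with the starting point, here is a minimal sketch of Vasicek's classical m-spacing estimator of differential entropy. It is not the tailored estimator for beta-generated distributions described above, whose exact form is not given here; the function name `vasicek_entropy` and the window choice `m=20` are our own illustrative assumptions.

```python
import math
import random


def vasicek_entropy(sample, m):
    """Vasicek's (1976) m-spacing estimate of differential entropy, in nats.

    Assumes continuous data without ties: a zero spacing would make
    math.log raise a ValueError.
    """
    x = sorted(sample)
    n = len(x)
    if not 1 <= m < n / 2:
        raise ValueError("window m must satisfy 1 <= m < n/2")
    total = 0.0
    for i in range(n):
        # Order statistics with indices outside 1..n are clamped to the
        # sample minimum or maximum, as in Vasicek's definition.
        upper = x[min(i + m, n - 1)]
        lower = x[max(i - m, 0)]
        total += math.log(n / (2 * m) * (upper - lower))
    return total / n


# Usage: a standard normal sample, whose true entropy is 0.5*log(2*pi*e) nats.
random.seed(0)
draws = [random.gauss(0.0, 1.0) for _ in range(5000)]
estimate = vasicek_entropy(draws, m=20)
true_value = 0.5 * math.log(2 * math.pi * math.e)
print(f"estimate={estimate:.3f}  true={true_value:.3f}")
```

With a large sample the estimate should land close to the true value. Vasicek's estimator is known to be biased downward in small samples, which is one reason tailored variants such as the one described above are of interest.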

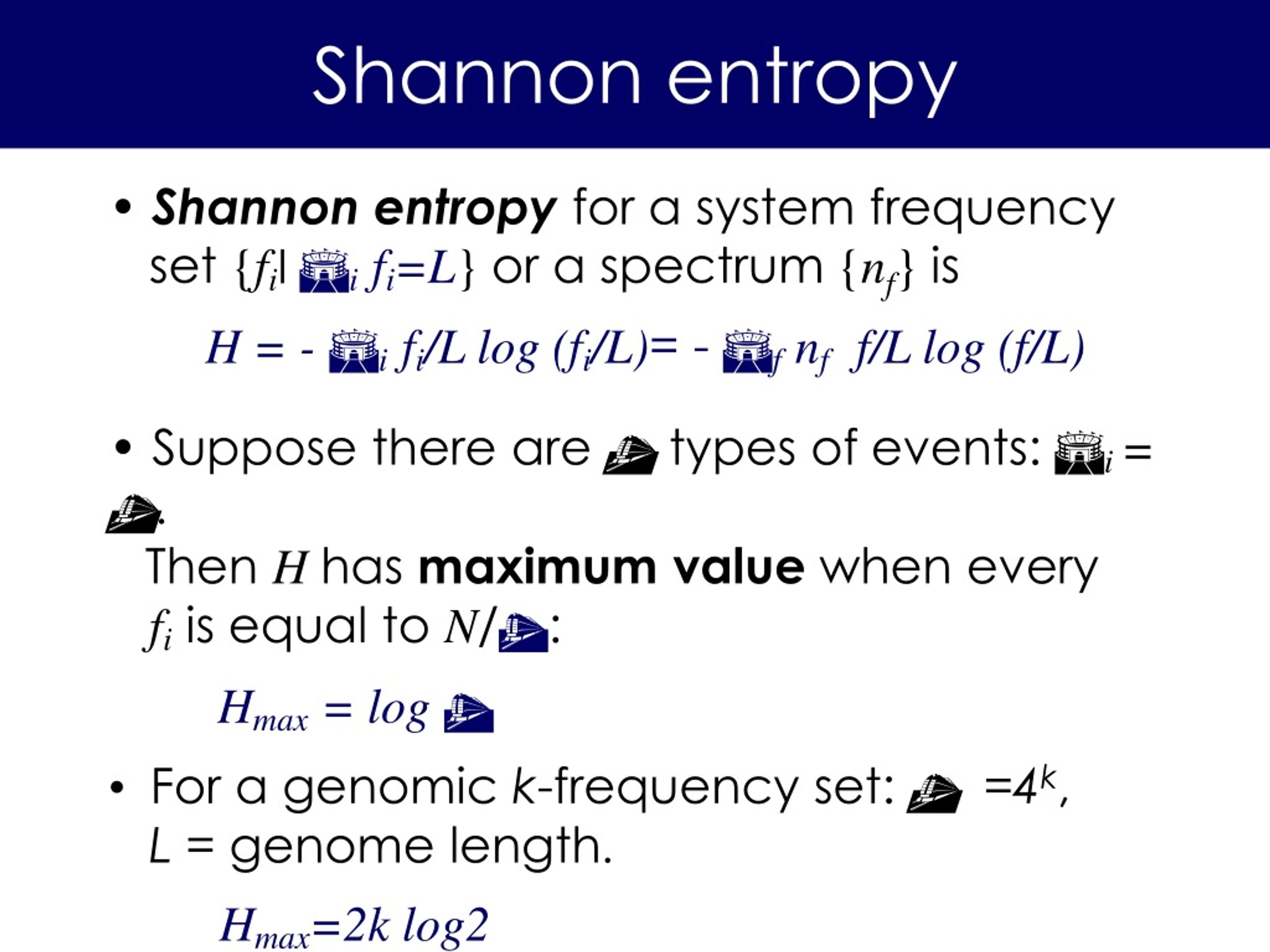

The aim of this article is twofold: on the one hand, to introduce an estimator of the Shannon entropy and study some of its statistical properties; on the other hand, to develop a goodness-of-fit test for beta-generated distributions and the distribution of order statistics.

Shannon wrote the paper "A Mathematical Theory of Communication", which I strongly encourage you to read for its clarity and as an amazing source of information. In it he introduced the measure now known as Shannon entropy, which is useful for discovering the statistical structure of a word or message.

Photo: Konrad Jacobs, CC BY-SA 2.0 de.

What is Entropy

In simple words, entropy measures the randomness within a variable. Consider a word as a discrete source with a finite number of character types: each possible character occurs with a certain probability $p_i$ and carries its own information, $-\log_2 p_i$, so there is an entropy contribution for each character. The entropy of the chosen word is then defined as the average of these contributions, weighted by the probability of occurrence of each character. This expression is called Shannon entropy or information entropy:

$$H = -\sum_{i} p_i \log_2 p_i,$$

where $p_i$ is the relative frequency of the $i$-th distinct character in the word.

Entropy measures the degree of our lack of information about a system. Notation varies between fields: in information theory, the symbol for entropy is H and the Boltzmann constant $k_B$ is absent.

Reference: Robert M. Gray, Entropy and Information Theory, First Edition, Corrected, Information Systems Laboratory, Electrical Engineering Department, Stanford University.

The definition above translates easily into Python. The core line, taken from the excellent URL Toolbox app on Splunkbase, accumulates each character's contribution: `entropy -= p * math.log(p, 2)  # Log base 2`. URL Toolbox exposes this computation as the `ut_shannon` macro, which can be run directly on any word you have in Splunk. For varied data the score is pretty high, which makes sense since there is a large variety of character frequencies. Sorting on the `ut_shannon` field in reverse order shows the opposite: words with a low variety of characters get a low score.

Catching DNS tunnels from subdomains in URLs

If we run the following query on BIND query logs, interesting results are shown:

```
sourcetype="isc:bind:query"
| eval list="mozilla"
| `ut_parse(query, list)`
| `ut_shannon(ut_subdomain)`
| table ut_shannon, query
| sort ut_shannon desc
```

The highest scores come from DNS tunnels, as well as from unknown traffic that could also be a tunnel.

I hope this post helps you see the tools and methodologies one can use to find unusual activity based strictly on DNS traffic.
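The per-character entropy and the subdomain ranking described above can be sketched in plain Python. This is a minimal illustration modelled on the quoted `entropy -= p * math.log(p, 2)` line, not the URL Toolbox source. The helper names (`shannon`, `naive_subdomain`, `rank_by_subdomain_entropy`) and the sample query names are hypothetical. In particular, `naive_subdomain` simply drops the last two labels, whereas the Splunk search passes `list="mozilla"` to `ut_parse`, presumably so that multi-part suffixes such as `co.uk` are handled correctly.

```python
import math
from collections import Counter


def shannon(word):
    """Shannon entropy of a string, in bits per character.

    Each distinct character is a symbol whose probability is its
    relative frequency in the string.
    """
    if not word:
        return 0.0
    length = len(word)
    entropy = 0.0
    for count in Counter(word).values():
        p = count / length
        entropy -= p * math.log(p, 2)  # Log base 2
    return entropy


def naive_subdomain(query):
    """Hypothetical helper: drop the last two labels (domain + TLD).

    Wrong for public suffixes such as co.uk; illustration only.
    """
    labels = query.rstrip(".").split(".")
    return ".".join(labels[:-2])


def rank_by_subdomain_entropy(queries):
    """Return (entropy, query) pairs, highest subdomain entropy first."""
    scored = [(shannon(naive_subdomain(q)), q) for q in queries]
    return sorted(scored, reverse=True)


# Hypothetical query names: two ordinary hosts and one tunnel-like label.
queries = [
    "www.example.com",
    "mail.example.com",
    "dGhpcyBpcyBhIHR1bm5lbA.example.net",
]
for score, query in rank_by_subdomain_entropy(queries):
    print(f"{score:.3f}  {query}")
```

The long, varied label ranks first, `mail` scores exactly 2 bits (four distinct characters, each with probability 1/4), and `www` scores 0 because it contains a single repeated character, mirroring the "low variety, low score" observation above.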
